An ex-xAI engineer allegedly uploads Grok's entire codebase to OpenAI, cashes out $7 million in stock, and walks away. xAI sues. The case may force OpenAI to prove its models aren't built on stolen tech. The headlines are about lawsuits and stolen IP, but the lesson buried underneath should rewrite every CISO's AI threat model.

Forget “AI hallucinations.” The real risk is human plot twists.

The plot twist that should worry every CISO

AI conversations almost always frame risk in terms of the model: Will it hallucinate? Will it leak training data? Will it generate biased output? Those are real questions, but they're design problems: they get fixed in the next model version, the next fine-tune, the next guardrail.

The xAI / OpenAI story is something different. The risk wasn't the model. It was the engineer with legitimate access who allegedly copied the codebase, cashed out $7 million in stock, and headed for a competitor.

That's an insider-risk story dressed up in AI clothing. And it's already happening every day at smaller scale — every time an employee pastes a customer list into ChatGPT, every time a developer uploads proprietary code to a chatbot for help, every time a junior analyst sends a confidential deck to Claude for a summary.

The flashy court case is the exception. The everyday version is the iceberg.

What this means for everyone else

Most companies aren't losing secrets to robots. They're leaking through employees who copy, paste, or overshare. The pattern is identical to every insider-risk incident of the last twenty years — but the new tools make it faster, easier, and higher-volume.

Three things have changed:

- The friction dropped to zero. Sharing data with a competitor used to require effort — burning a CD, sending an email, exporting a database. Now it's a paste into a chat box that takes three seconds.

- The destination is unbounded. A copied file used to end up on one machine. A pasted prompt ends up with a third-party provider that may retain it, may train on it depending on the account's settings, and could in the worst case surface fragments of it to an unrelated user.

- The intent is often innocent. The xAI engineer knew what they were doing. But 99% of the enterprise data-leak incidents we see involve people who are just trying to be productive. That makes these incidents harder to deter, harder to detect, and harder to prosecute.

Three vectors of human-driven AI data loss

The leaving employee

The xAI case sits here. An employee with legitimate access decides to take the data with them on the way out. Historically this involved physical media; now it can involve uploading source code to ChatGPT for “help debugging” on the last day of work, then accessing those conversations later.

Defence: identify the high-IP roles, monitor AI usage patterns specifically in their last 60 days of employment, require explicit data classification for all uploads, and lean on retention policies that make the conversation history reviewable.
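As a rough illustration of that defence, the sketch below filters AI uploads down to the ones made by high-IP leavers in their last 60 days and puts unclassified uploads first. The `Employee` and `AiUpload` record shapes and the `uploads_to_review` helper are hypothetical; real HR feeds and AI-usage logs will look different.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical record shapes, for illustration only.
@dataclass
class Employee:
    user_id: str
    high_ip_role: bool
    last_day: Optional[date]  # None if no departure is scheduled

@dataclass
class AiUpload:
    user_id: str
    day: date
    classification: Optional[str]  # None means the upload was never classified

WATCH_WINDOW = timedelta(days=60)

def uploads_to_review(employees, uploads):
    """Return uploads made by high-IP leavers within 60 days of their last day."""
    leavers = {e.user_id: e for e in employees if e.high_ip_role and e.last_day}
    flagged = []
    for upload in uploads:
        leaver = leavers.get(upload.user_id)
        if leaver is None:
            continue
        window_start = leaver.last_day - WATCH_WINDOW
        if window_start <= upload.day <= leaver.last_day:
            flagged.append(upload)
    # Unclassified uploads sort first: they are the ones nobody has looked at.
    flagged.sort(key=lambda u: u.classification is not None)
    return flagged
```

This only works if the conversation history is retained and attributable to a user, which is why the retention policy matters as much as the monitoring.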

The curious employee

The most common pattern. Someone discovers that pasting a complex internal document into Claude gives a beautifully formatted summary in five seconds. They start doing it routinely. The data they paste includes customer names, financial figures, contracts, and internal strategy, all of it now held by a third-party LLM provider and potentially eligible for training.

These people aren't bad actors. They're your highest performers. The defence isn't to punish them; it's to give them an approved path that's as fast as the unapproved one.

The helpful employee

A customer asks a question over email. The support agent doesn't know the answer, pastes the customer's message and the company's product manual into ChatGPT, and gets a perfect reply. The customer never knew their data went to OpenAI. The agent thought they were doing their job.

This is the case that scares regulators the most. Under GDPR, the agent just disclosed that customer's personal data to a third party with no processor agreement in place. The Article 28 paperwork doesn't exist. The lawful basis isn't recorded. The data subject was never informed.

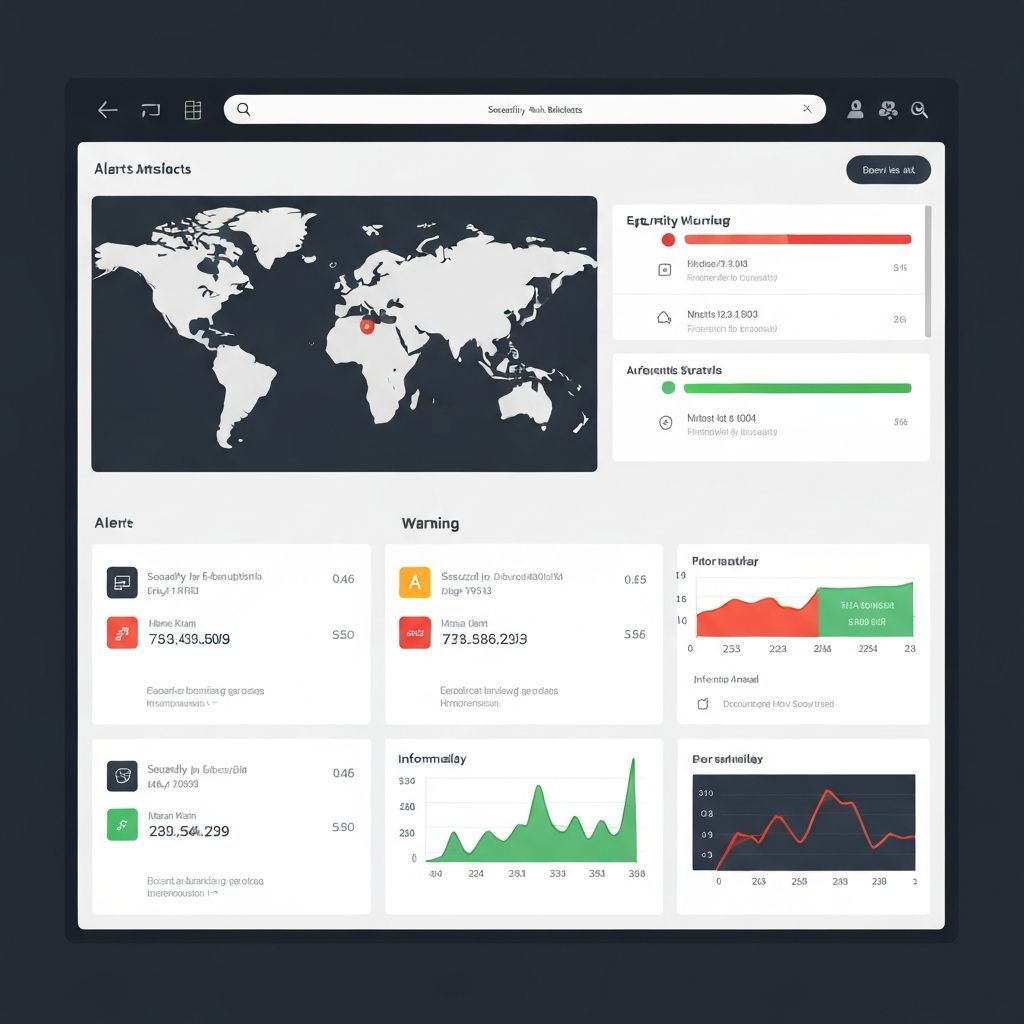

What traditional DLP misses

Most enterprise DLP was designed for the email-and-USB-drive era. It looks for sensitive data in outbound channels it knows about: SMTP, HTTPS to known SaaS vendors, file uploads via the corporate proxy.

Generative AI breaks every assumption:

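- The transport is HTTPS to a CDN. Your DLP can't inspect TLS traffic to OpenAI's frontend without man-in-the-middle interception, which requires a trusted root certificate on every device and triggers browser warnings or broken connections wherever that certificate is missing.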

- The user might be on personal Wi-Fi. Or 5G. Or a personal device. None of those go through your proxy.

- The user might be on a personal account. No SSO. No corporate audit log. No way to attribute the action to the user inside the SaaS.

- The data shape is unstructured prose. DLP signatures designed to spot credit-card numbers don't spot a paragraph that summarises the company's acquisition strategy.

The honest answer is that perimeter DLP can't see most AI-driven data loss. The only place to catch it is at the device — at the moment the prompt is being typed.
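To make the signature problem concrete, here is a minimal sketch of a classic card-number detector: a digit-run regex plus a Luhn checksum. The function names are illustrative and do not come from any particular DLP product.

```python
import re

# Runs of 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){13,19}\b")

def luhn_valid(digits):
    """Luhn checksum: double every second digit from the right, sum, check mod 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0

def signature_hits(text):
    """Return card-like numbers found in text that pass the Luhn check."""
    hits = []
    for match in CARD_PATTERN.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits

card_prompt = "Refund the card 4111 1111 1111 1111 please"
strategy_prompt = (
    "We plan to acquire our two largest regional rivals next quarter "
    "and fold their customers into the enterprise tier."
)
```

`signature_hits(card_prompt)` returns `["4111111111111111"]` (a well-known test number), while `signature_hits(strategy_prompt)` returns an empty list. The second prompt is arguably the more damaging leak, and a pattern-based signature has nothing to match on.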

What actually works

A practical defence stack:

- Device-level prompt visibility. Browser extensions and desktop apps that capture what users actually type into AI tools. Because capture happens on the device, it works whether the user is on corporate Wi-Fi, mobile data, or a personal account.

- Content classification at the prompt boundary. Detect customer PII, payment data, financial figures, source code, contract language — before the prompt is submitted, not after.

- Real-time nudges, not just blocks. The most effective intervention is a soft warning at the point of paste — “this looks like customer data; the approved tool is X.” Blocks generate workarounds; nudges generate behaviour change.

- An approved alternative that's as fast. Employees route around restrictions when the approved path is slower. Pair every block with a one-click alternative on a sanctioned tool.

- Audit trail tied to identity. Every prompt, every response, every approval — tied to the user account, retrievable on demand for an investigation.

That's the stack we built into Prompt Shields. Not because the tech was novel — most of the primitives have existed for years — but because nobody had assembled them specifically for the generative-AI era.
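As a rough illustration of how the classification, nudge, and audit steps in the list above fit together, consider the sketch below. It is not Prompt Shields' implementation: the detectors, the `BLOCK_LABELS` policy, the `APPROVED_TOOL` placeholder, and both function names are assumptions made up for this example, and real classification needs far more than a few regexes.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone

# Illustrative detectors only (assumed patterns, not a production rule set).
DETECTORS = {
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "source_code": re.compile(r"^\s*(def |class |import |#include|function )", re.MULTILINE),
    "contract_language": re.compile(r"\b(hereinafter|indemnif\w+|governing law)\b", re.IGNORECASE),
}

# Hypothetical policy: these labels block, every other match gets a nudge.
BLOCK_LABELS = {"source_code"}
APPROVED_TOOL = "the approved internal assistant"  # placeholder name

@dataclass
class Decision:
    action: str   # "allow", "nudge", or "block"
    labels: list
    message: str

def check_prompt(text):
    """Classify a prompt before it is submitted and decide how to respond."""
    labels = sorted(name for name, rx in DETECTORS.items() if rx.search(text))
    if not labels:
        return Decision("allow", labels, "")
    action = "block" if BLOCK_LABELS & set(labels) else "nudge"
    readable = ", ".join(label.replace("_", " ") for label in labels)
    # Every nudge or block names the sanctioned alternative.
    message = f"This looks like {readable}; the approved tool is {APPROVED_TOOL}."
    return Decision(action, labels, message)

def audit_record(user_id, decision):
    """Build an identity-tied audit entry for the decision."""
    return {
        "user_id": user_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": decision.action,
        "labels": decision.labels,
    }
```

For example, `check_prompt("Please summarise this for jane.doe@example.com")` yields a nudge labelled `email_address`, while a prompt containing a line that starts with `def ` is blocked under this assumed policy. Defaulting to nudges and reserving blocks for a short list reflects the point above that blocks tend to generate workarounds.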

Where to start

You can patch models. You can't patch curiosity.

- Need device-level visibility now? The Atlas AI Insight Platform ships desktop apps for macOS and Windows and browser extensions for Chrome, Safari and Edge. It captures actual prompts, classifies sensitive content, and maps everything to the AI use-case register your auditor is going to ask about.

- Need to update the policy too? The 8-week AI Governance & Risk Assessment includes insider-risk-aware policy templates that account for the new failure modes.