Everyone wants to be an AI pioneer. Nobody wants to read the controls spreadsheet.

I've sat in a hundred AI strategy meetings in the last twelve months. The pattern is identical: the room gets excited about what AI could do, jumps straight to the procurement decision, and then — six months later — discovers that the “AI rollout” is actually fifty unmanaged tools, no risk owner, and a compliance officer asking awkward questions before the next board meeting.

The pioneer instinct is right. The execution model is wrong.

What gets bought first

The shiny layer always sells:

- Chatbots

- AI agents

- Vibe-coding platforms

- “AI strategy” slide decks

- Someone in the room saying “we need to move fast”

None of these are bad. The problem is that they're bought as a substitute for the boring layer underneath — the inventory, the policy, the audit trail. And that substitution is what turns a programme into an incident report.

What's actually happening underneath

Six months after the AI strategy off-site, here's what we usually find when we run the discovery:

- No AI inventory. Nobody can produce a list of every AI tool in use. The CIO has a partial list. IT has a different partial list. Procurement has a third. None of them include the personal ChatGPT accounts the team uses every day.

- No prompt controls. Anyone can paste anything into any AI tool. Customer lists. Contracts. Internal financial figures. Source code. Health records. The DLP tool your IT team bought in 2019 doesn't inspect generative-AI traffic.

- No ownership. When asked “who owns the AI hiring tool?”, the answer is “the HR team.” That's not ownership. That's a department.

- No audit trail. Three months from now, when an auditor asks “what AI was used to process this customer record?”, the answer is “we don't know.”

- Gary from Sales connected 14 tools to Google Workspace. See below.

Meet Gary from Sales

Gary is real. He's on every customer's list. He found a few productivity tools at a sales conference, signed up with his work Google account, granted them OAuth access to Workspace, and now there are fourteen third-party AI assistants reading his calendar, his email, his Drive, and — by extension — his company's contact list, draft contracts, and quarterly forecast.

Gary didn't do anything wrong. He moved fast. He's the AI pioneer the strategy off-site asked for. He's also the audit finding the auditor will lead with.
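Finding the Garys is mostly a matter of grouping OAuth grants by user and looking at the scopes. Here is a minimal sketch in Python, assuming the grants have already been exported into simple records. The `OAuthGrant` shape, the `SENSITIVE_SCOPES` set and the `max_apps` threshold are illustrative assumptions, not part of any Google Workspace API.

```python
from collections import defaultdict
from dataclasses import dataclass

# Scopes treated as sensitive for this sketch (an assumption; tune to your policy).
SENSITIVE_SCOPES = {
    "https://www.googleapis.com/auth/gmail.readonly",
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/calendar",
}


@dataclass(frozen=True)
class OAuthGrant:
    """One third-party app authorised by one user (hypothetical export format)."""
    user: str
    app: str
    scopes: frozenset


def flag_users(grants, max_apps=3):
    """Return {user: sorted app names} for users with more than max_apps
    third-party apps holding at least one sensitive scope."""
    apps_by_user = defaultdict(set)
    for grant in grants:
        if grant.scopes & SENSITIVE_SCOPES:
            apps_by_user[grant.user].add(grant.app)
    return {
        user: sorted(apps)
        for user, apps in apps_by_user.items()
        if len(apps) > max_apps
    }
```

Run against a real export, the output is a short list of names to talk to, not a list of people to blame.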

How innovation becomes an incident report

Every AI incident I've seen in the last year follows the same shape:

- A team adopts an AI tool to solve a real problem. Productivity goes up.

- The tool gets adopted by adjacent teams. Usage scales horizontally without anyone re-doing the security review.

- Someone uses the tool with sensitive data. There's no policy that says they shouldn't — or the policy exists in a Confluence page nobody reads.

- A customer notices something off. Or a journalist does. Or a regulator. Or — the most common one — the data appears in an AI vendor's training set.

- The CISO is on a call by 6pm explaining what happened. They don't know yet, because nobody has the inventory.

The incident is downstream of three things being missing: visibility, ownership and policy. Each one compounds the others. Without visibility you can't assign owners. Without owners, policy is theoretical. Without policy, visibility is just a bigger spreadsheet.

Speed with brakes, steering and insurance

The truth: AI success is not just speed. It is speed with brakes, steering and insurance.

The fastest cars on the planet have the best brakes. Formula 1 cars don't go fast despite the safety engineering — they go fast because of it. The carbon-fibre survival cell, the brake-by-wire system, the tyre temperature sensors, the driver's HANS device: every safety system makes the car go faster because the driver can push the limit knowing the failure mode is bounded.

AI in the enterprise works the same way. Without controls, every team plays defensively because nobody knows what's safe. With controls, the business can experiment confidently because the failure mode is bounded. Counter-intuitive, but every well-run AI programme we've seen is faster than the ones that skipped the controls.

What the controls spreadsheet actually contains

Nobody wants to read it because it sounds boring. Here's what's actually in it:

- The use case register — every AI tool in active use, what it does, what data it touches, who uses it.

- The risk classification — for each use case, EU AI Act risk tier, OWASP LLM Top 10 exposure, NIST AI RMF mapping, ISO 42001 control coverage.

- The owner per use case — a real human name, not “the team.”

- The mitigation status — for each identified risk, what control is in place and when it was last validated.

- The audit pack — the evidence you'd hand a regulator on a Tuesday morning.

That's it. Five things. Not a thousand-row matrix. Five questions answered for every AI use case. The spreadsheet sounds boring because the language is — but the content is the difference between a programme that scales and a programme that becomes a press release.
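To make "five questions per use case" concrete, here is one way a register row could be modelled. This is a sketch under assumptions: the `UseCase` and `Mitigation` field names and the `gaps` helper are hypothetical, and a real register would carry the framework mappings in more detail.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Mitigation:
    risk: str
    control: str
    last_validated: Optional[date] = None  # None means never validated


@dataclass
class UseCase:
    tool: str
    purpose: str
    data_touched: list
    users: list
    risk_tier: str = ""   # free-text tier label in this sketch
    owner: str = ""       # a named person, not a team
    mitigations: list = field(default_factory=list)
    evidence: list = field(default_factory=list)  # links for the audit pack


def gaps(uc: UseCase) -> list:
    """Return which of the five questions are still unanswered."""
    missing = []
    if not (uc.purpose and uc.data_touched and uc.users):
        missing.append("register")
    if not uc.risk_tier:
        missing.append("risk classification")
    if not uc.owner:
        missing.append("owner")
    if not uc.mitigations or any(m.last_validated is None for m in uc.mitigations):
        missing.append("mitigation status")
    if not uc.evidence:
        missing.append("audit pack")
    return missing
```

A use case with an empty `gaps` list is one you can defend in front of an auditor. Everything else is a to-do list with a name attached.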

Five controls that pay for themselves

If you have to start somewhere, start with these five:

- A live discovery feed. Browser extensions and a desktop app that capture actual AI usage, not a self-reported survey. Pays for itself the first time an auditor asks for the AI inventory.

- A named owner per use case. The single highest-leverage control because it forces accountability conversations to happen before the incident, not after.

- A simple intake workflow. Anyone introducing a new AI tool answers five questions. Takes ten minutes. Stops 80% of the rogue-tool problem.

- Prompt-content scanning. What sensitive data has crossed the boundary into external AI tools in the last 30 days? Most companies can't answer this. The first month of data is usually alarming.

- Framework-mapped reporting. Every use case scored against the EU AI Act, NIST AI RMF, ISO 42001 and OWASP LLM Top 10. Live. Not a quarterly snapshot.
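Prompt-content scanning is the control that sounds hardest and is the easiest to prototype. The sketch below is deliberately naive: it assumes you already have captured prompt text, and the three regular expressions in `PATTERNS` are illustrative stand-ins, not a production-grade detector.

```python
import re
from collections import Counter

# Illustrative patterns only; real detection needs validation and tuning.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
}


def scan_prompt(text):
    """Count matches per pattern in one prompt, omitting patterns with no hits."""
    counts = Counter()
    for name, pattern in PATTERNS.items():
        hits = len(pattern.findall(text))
        if hits:
            counts[name] = hits
    return counts


def summarise(prompts):
    """Aggregate match counts across a batch of prompts, e.g. the last 30 days."""
    total = Counter()
    for prompt in prompts:
        total += scan_prompt(prompt)
    return total
```

Even a crude counter like this turns "we think people are careful" into a number you can put in front of the board.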

Where to start

If your AI programme currently runs on vibes and optimism, there are two ways to bring it under control:

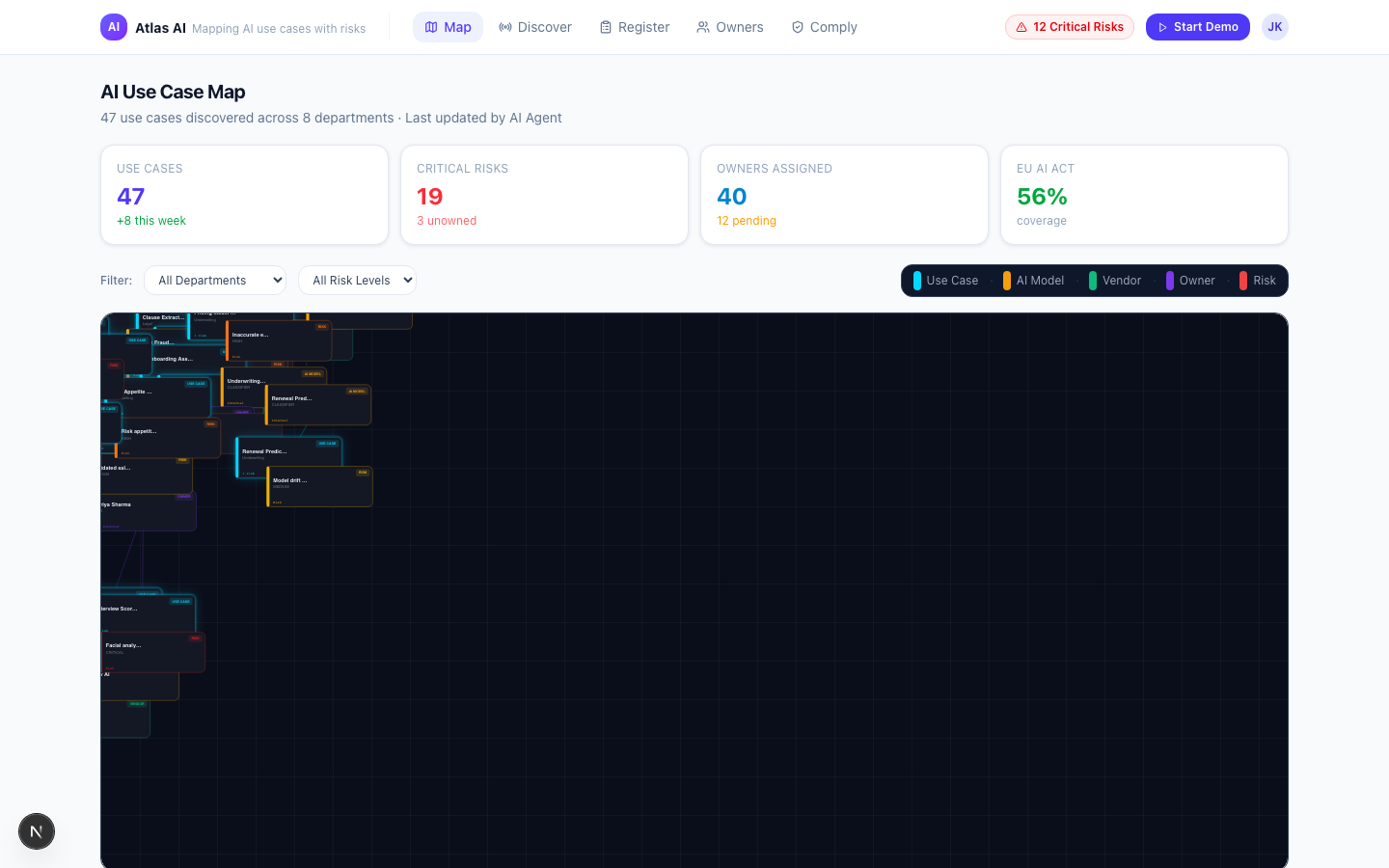

- Already have an AI strategy but no controls? Run a 4-week pilot of the Atlas AI Insight Platform. By Day 30 you have a live AI register, ownership per use case, and the audit-ready evidence pack.

- Don't have the programme yet? Start with our 8-week AI Governance & Risk Assessment. Policy, operating model, framework mapping, board-ready output.

AI pioneers are great. AI pioneers with brakes, steering and insurance are the ones who actually arrive.