Organisations are deploying large language models faster than they are securing them. ChatGPT reached 100 million users in two months. Enterprise AI adoption has outpaced every previous technology wave. And unlike most software, LLMs introduce a class of vulnerabilities that conventional security tooling simply does not detect.

This guide covers the essential LLM security best practices for teams running AI in production — from threat modelling through to runtime monitoring. It is written for security engineers, AI engineers, and the security leaders who need to understand what “securing an LLM” actually requires.

Why LLM Security Is Different

Traditional application security is built on deterministic systems. Given a fixed input, the application produces a fixed output. You can enumerate inputs, write unit tests, and verify behaviour with mathematical certainty.

LLMs break every one of these assumptions. They are probabilistic by design. Their outputs vary for the same input. They process natural language — which cannot be sanitised the way SQL or HTML can. And they are trained on vast corpora of human-generated text, including text that contains manipulation techniques, social engineering patterns, and adversarial examples.

The consequences are significant. The OWASP LLM Top 10 — the first security standard specifically for large language models — lists ten vulnerability classes, several of which have no equivalent in the OWASP Web Application Top 10. Your existing SAST/DAST tooling, WAF rules, and penetration testing playbooks will miss them entirely.

The LLM Threat Model

Before building defences, you need a clear threat model. LLMs are exposed to attack across several vectors that often do not exist in traditional applications.

Prompt Injection

The most critical LLM vulnerability class. An attacker embeds instructions inside content that the model processes — a document, a web page, an email — and the model follows those instructions instead of the developer's system prompt. Direct prompt injection arrives through user input. Indirect prompt injection arrives through external data sources the model retrieves, such as web search results or uploaded files.

In agentic AI systems — where an LLM can browse the web, send emails, or call APIs — a successful indirect injection can trigger real-world actions: sending emails, exfiltrating data, or modifying records.

Training Data and Context Leakage

LLMs can reveal sensitive information in two ways. First, they may regurgitate training data — including personally identifiable information or proprietary text that appeared in pre-training corpora. Second, they may expose content from their context window, including the system prompt, earlier conversation turns, or retrieved documents, in response to carefully crafted user queries.

In RAG (retrieval-augmented generation) architectures, this is especially dangerous: an attacker may be able to extract documents from the knowledge base simply by asking the model to repeat what it knows.

Insecure Output Handling

LLM outputs are often passed directly into downstream systems — rendered as HTML, executed as code, or used to construct SQL queries. When those outputs contain attacker-controlled content, the result can be cross-site scripting, code injection, or privilege escalation in the consuming system. This is sometimes called “second order” prompt injection: the model itself is not compromised, but its output is used in a way that compromises the application.

Model and Plugin Supply Chain Risks

Many organisations use third-party models, fine-tuned adapters, embedding models, or LLM plugins. Each is a potential supply chain risk. A compromised model weight file, a malicious plugin with excessive permissions, or a third-party prompt library with injected instructions can all introduce vulnerabilities without any flaw in application code.

Best Practice 1: Input Validation and Sanitisation

Not all LLM security failures come from sophisticated attacks. Many come from user inputs that were never expected by the developer. Input validation is the first line of defence.

Define what legitimate input looks like — in terms of length, character sets, structure, and intent — and reject or sanitise inputs that fall outside those bounds before they reach the model. This will not stop all prompt injection, but it eliminates a significant fraction of opportunistic attacks.

For inputs that include untrusted external content (uploaded files, web-fetched text, API responses), treat them as a separate, lower-trust input channel. Mark them explicitly in the prompt, or process them in a separate model invocation with a hardened system prompt that has no access to sensitive context.

Tools like Prompt Shields' Prompt Scorer can evaluate inputs against known injection patterns before they reach your model — adding a programmatic check that is independent of the LLM itself.

Best Practice 2: Harden Your System Prompt

The system prompt is not a security boundary — it is instructions to a language model, and language models follow instructions. But it is still worth hardening.

Include explicit instructions about what the model should not do, what information it should not reveal, and how it should respond to requests that seem designed to override its instructions. Use clear, unambiguous language. Avoid phrases like “never reveal the system prompt” — which can be trivially bypassed — and instead define what information is confidential and why.

Test your system prompt against adversarial inputs regularly. Every time you update the system prompt, run a standard set of adversarial probes to check for regressions.

Best Practice 3: Apply Least Privilege to AI Agents

In agentic AI systems, the model can take actions: searching the web, calling APIs, reading and writing files, sending messages. The scope of those actions should be the minimum required for the task.

Design your tool permission model carefully. An LLM that can send emails should not also have access to the contacts database unless the task requires it. An LLM that can query a database should have read-only access unless writes are genuinely needed. Compartmentalise: if one agent is compromised via prompt injection, its blast radius should be limited by its permission scope.

Require human confirmation before irreversible actions. Even a lightweight “are you sure?” checkpoint — presented to a user before an email is sent or a record is deleted — dramatically limits the damage from successful agentic prompt injection.

Best Practice 4: Validate and Filter LLM Outputs

Treat LLM outputs as untrusted input to downstream systems. Before passing an LLM response to a rendering engine, a code executor, or a database, apply the same validation you would apply to any user-supplied input.

For HTML rendering: sanitise for XSS. For code execution: run in a sandboxed environment with resource limits. For database queries: use parameterised queries, never string interpolation. For structured data extraction: validate the schema of the extracted data before acting on it.

Use a secondary classifier to screen outputs for policy violations before they are returned to the user. This is especially important in customer-facing applications where an injected output could damage brand reputation or expose sensitive information.

Best Practice 5: Monitor in Real Time

Most LLM security teams operate blind. They log prompts and responses — if that — but have no alerting, no anomaly detection, and no way to detect an attack while it is in progress.

Effective LLM monitoring looks different from traditional application monitoring. You are not watching for HTTP 4xx/5xx rates. You are watching for:

- Prompt injection patterns — inputs that contain instruction-like text, jailbreak phrases, or attempts to reference the system prompt

- Data exfiltration signals — outputs that contain large volumes of data, PII patterns, or content that looks like the system prompt

- Policy violations — outputs that violate your defined content or behaviour policies

- Anomalous usage patterns — unusual query volumes, unusual query types, or queries from unexpected user segments

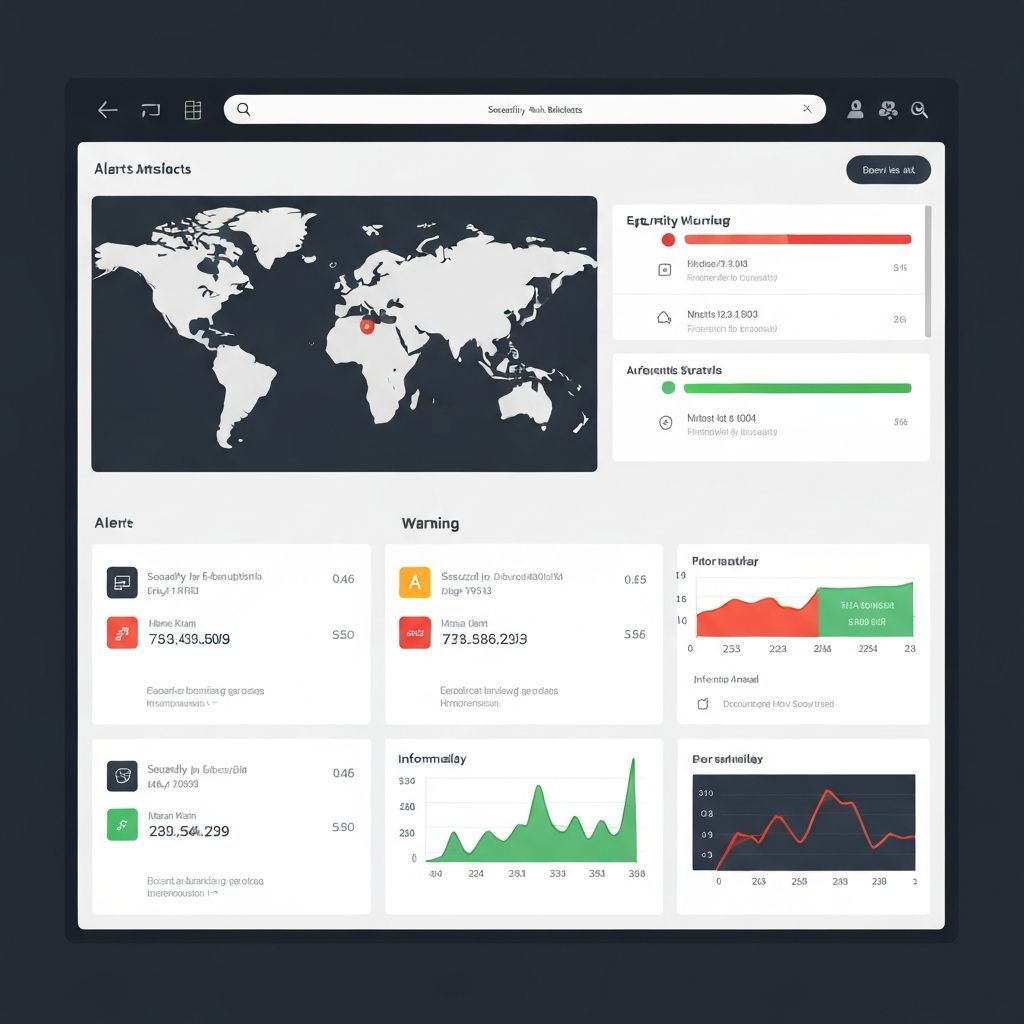

Prompt Shields' Atlas AI platform provides real-time monitoring and alerting for these signals, with dashboards designed for security operations teams rather than ML engineers.

Best Practice 6: Red Team Before You Ship

Red teaming — adversarial testing by a team that actively tries to break the system — is the most effective way to find LLM security issues before attackers do.

LLM red teaming differs from traditional penetration testing. Rather than looking for known vulnerability classes with automated scanners, LLM red teamers craft adversarial prompts, test for context leakage, probe for harmful outputs, and simulate realistic attacker goals (data exfiltration, jailbreaking content filters, hijacking agentic actions).

Run red teaming before every major model update, every change to the system prompt, and every extension of the model's tool access. Build a library of adversarial test cases over time — each confirmed vulnerability becomes a regression test.

Automated red teaming tools can significantly increase coverage. Prompt Shields' Promptly AI assistant and red teaming playbooks help security teams run structured adversarial evaluation without needing deep ML expertise.

Best Practice 7: Define an AI Usage Policy

Technical controls are necessary but not sufficient. An AI usage policy defines what your organisation will and will not do with AI, who is responsible for AI security decisions, and what approval process applies to new AI use cases.

A good AI usage policy covers: approved use cases and prohibited use cases, data classification rules (what data can be sent to which models), third-party model evaluation requirements, incident response procedures for AI security events, and employee training requirements.

Prompt Shields offers a free AI Usage Policy Generator that produces a customised, ready-to-use policy based on your organisation's profile — covering all of the above sections and exportable to Word or PDF.

Mapping to OWASP LLM Top 10

The OWASP LLM Top 10 (2025 edition) provides the industry-standard taxonomy for LLM vulnerabilities. The practices above map to its categories as follows:

- LLM01 — Prompt Injection: addressed by input validation (BP1), system prompt hardening (BP2), output validation (BP4), and red teaming (BP6)

- LLM02 — Insecure Output Handling: addressed by output validation (BP4)

- LLM03 — Training Data Poisoning: addressed by supply chain controls and model provenance (BP implied)

- LLM06 — Sensitive Information Disclosure: addressed by input controls (BP1), system prompt hardening (BP2), and output filtering (BP4)

- LLM08 — Excessive Agency: addressed directly by least-privilege agent design (BP3)

- LLM09 — Overreliance: addressed by monitoring (BP5) and policy (BP7)

LLM Security Checklist

Use this checklist when assessing an LLM deployment. It is not exhaustive, but it covers the most critical controls:

- Input validation applied to all user-supplied content before model invocation

- Untrusted external content processed in a separate, lower-privilege invocation

- System prompt reviewed against adversarial test cases

- LLM tool permissions documented and scoped to minimum required

- Human confirmation required before irreversible agentic actions

- LLM outputs sanitised before passing to HTML renderers, code executors, or databases

- Secondary output classifier in place for customer-facing applications

- Real-time monitoring and alerting configured for prompt injection, PII leakage, and policy violations

- Red teaming completed before launch and after every major update

- AI usage policy published and training completed for all relevant staff

- Third-party models and plugins reviewed for supply chain risk before deployment

- Incident response playbook defined for AI security events

Conclusion

LLM security is not a solved problem. The threat landscape is evolving alongside the technology, and the defences that work today will need to adapt as models become more capable and more deeply integrated into business processes.

But the fundamentals are clear. Validate inputs. Harden system prompts. Apply least privilege. Filter outputs. Monitor in real time. Red team regularly. Define policy. These seven practices will not eliminate all risk — but they will raise the cost of attack significantly and make your AI deployment defensible when something does go wrong.

Prompt Shields provides the tooling to implement these practices: the Prompt Scorer for input screening, the Atlas AI platform for real-time monitoring, and the AI Policy Generator to get your governance foundations in place. Start with whichever is most urgent for your deployment.